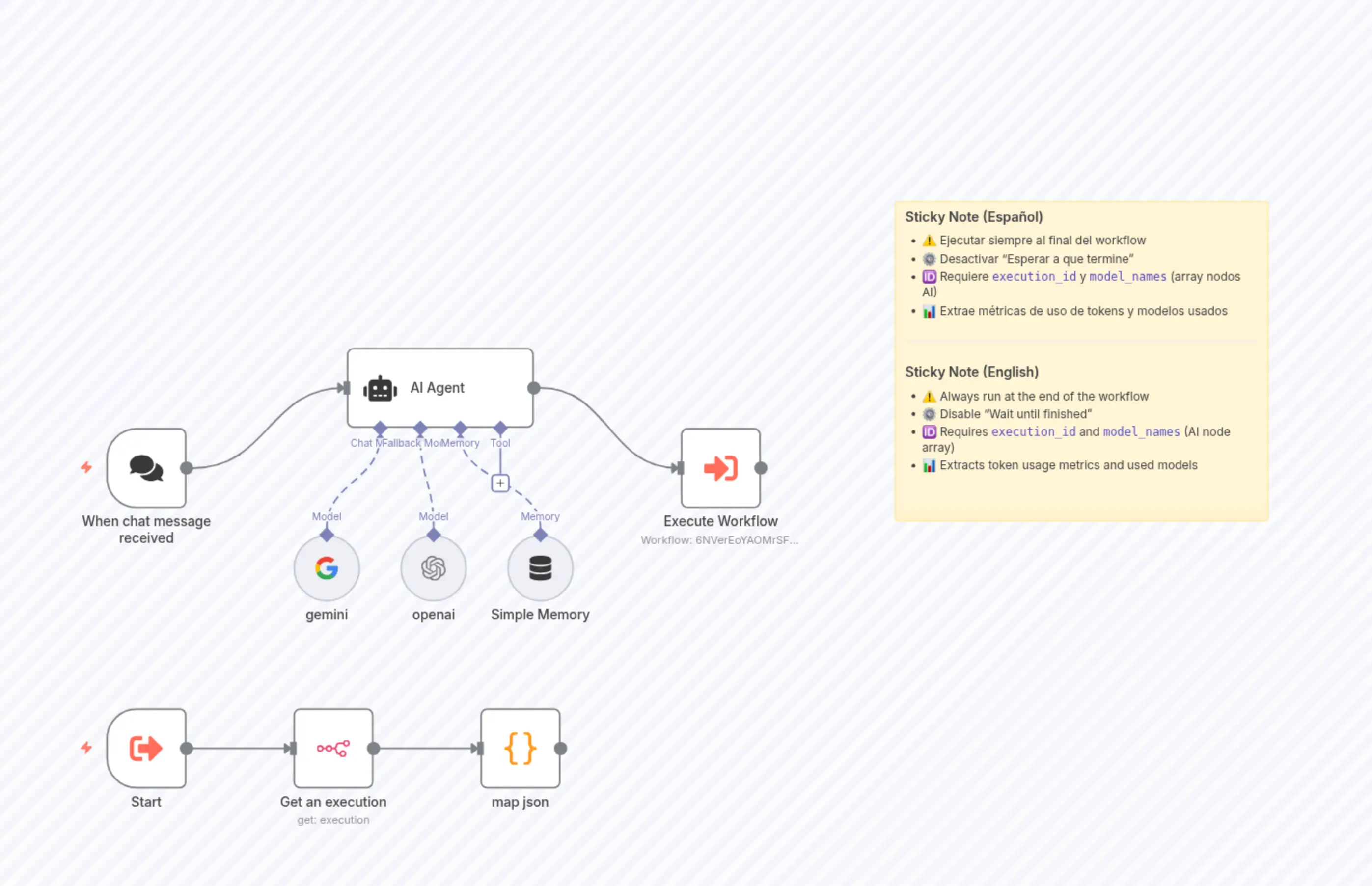

Track and Monitor AI Token Usage Metrics for OpenAI and Gemini Models

Description

Categories

🔧 Engineering, 🤖 AI & Machine Learning

Nodes used

n8n-nodes-base.n8n, n8n-nodes-base.code, n8n-nodes-base.stickyNote, @n8n/n8n-nodes-langchain.agent, n8n-nodes-base.executeWorkflow, @n8n/n8n-nodes-langchain.chatTrigger, @n8n/n8n-nodes-langchain.lmChatOpenAi, n8n-nodes-base.executeWorkflowTrigger, @n8n/n8n-nodes-langchain.lmChatGoogleGemini, @n8n/n8n-nodes-langchain.memoryBufferWindow

Price: Free

Views: 0

Last updated: 11/28/2025

workflow.json

{

"meta": {

"instanceId": "7b7dc168a72f181e85df3824a8eb52919ab80580196b0210e9d3fbb2583e0c2f",

"templateCredsSetupCompleted": true

},

"nodes": [

{

"id": "39c42f46-1415-479e-afce-63877743d4c4",

"name": "Get an execution",

"type": "n8n-nodes-base.n8n",

"position": [

160,

224

],

"parameters": {

"options": {

"activeWorkflows": true

},

"resource": "execution",

"operation": "get",

"executionId": "={{ $('Start').item.json.excecution_id }}",

"requestOptions": {}

},

"credentials": {

"n8nApi": {

"id": "Mb6utQVh6KkCeIIo",

"name": "n8n account"

}

},

"typeVersion": 1

},

{

"id": "01eb07a4-4d4d-4abf-b89b-01eef5b995e1",

"name": "Start",

"type": "n8n-nodes-base.executeWorkflowTrigger",

"position": [

-64,

224

],

"parameters": {

"workflowInputs": {

"values": [

{

"name": "excecution_id"

},

{

"name": "model_names",

"type": "array"

}

]

}

},

"typeVersion": 1.1

},

{

"id": "2d313b6b-7bf9-4b90-95ac-868642f1bd35",

"name": "map json",

"type": "n8n-nodes-base.code",

"position": [

384,

224

],

"parameters": {

"jsCode": "const input = $input.all();\nconst data = input[0].json;\n\nconst keysToSearch = $('Start').item.json.model_names;\nconst nodes = data.workflowData?.nodes ?? [];\n\nconst totals = {\n\tcompletionTokens: 0,\n\tpromptTokens: 0,\n\ttotalTokens: 0,\n};\n\nconst models = new Set();\nconst detailedUsages = [];\n\nfunction accumulate(usage, model) {\n\tif (\n\t\ttypeof usage.completionTokens === 'number' &&\n\t\ttypeof usage.promptTokens === 'number' &&\n\t\ttypeof usage.totalTokens === 'number'\n\t) {\n\t\ttotals.completionTokens += usage.completionTokens;\n\t\ttotals.promptTokens += usage.promptTokens;\n\t\ttotals.totalTokens += usage.totalTokens;\n\n\t\tif (model) models.add(model);\n\n\t\tdetailedUsages.push({\n\t\t\tmodel,\n\t\t\tusage: { ...usage },\n\t\t});\n\t}\n}\n\nfunction findTokenUsageRecursively(obj, model) {\n\tif (typeof obj !== 'object' || obj === null) return;\n\n\tif (\n\t\ttypeof obj.completionTokens === 'number' &&\n\t\ttypeof obj.promptTokens === 'number' &&\n\t\ttypeof obj.totalTokens === 'number'\n\t) {\n\t\taccumulate(obj, model);\n\t\treturn;\n\t}\n\n\tif (Array.isArray(obj)) {\n\t\tobj.forEach((item) => findTokenUsageRecursively(item, model));\n\t} else {\n\t\tconst detectedModel = obj.model || model;\n\t\tfor (const key in obj) {\n\t\t\tif (Object.prototype.hasOwnProperty.call(obj, key)) {\n\t\t\t\tfindTokenUsageRecursively(obj[key], detectedModel);\n\t\t\t}\n\t\t}\n\t}\n}\n\nfunction processExecution(exec, nodeName) {\n\tconst node = nodes.find((n) => n.name === nodeName);\n\t// Optional chaining throughout: nodeName may not match any workflow node\n\tconst raw = node?.parameters?.modelName || node?.parameters?.model || node?.parameters?.name;\n\t// Resource-locator parameters keep the model name under .value\n\tconst model = raw && typeof raw === 'object' ? raw.value : raw;\n\tfindTokenUsageRecursively(exec, model);\n}\n\n// Process each listed node\nfor (const key of keysToSearch) {\n\tconst executions = data.data?.resultData?.runData?.[key];\n\tif (!executions) continue;\n\n\tif (Array.isArray(executions)) {\n\t\texecutions.forEach((exec) => processExecution(exec, key));\n\t} else if (typeof executions === 'object') {\n\t\tprocessExecution(executions, key);\n\t}\n}\n\nreturn [\n\t{\n\t\tjson: {\n\t\t\ttotals,\n\t\t\tmodels: Array.from(models),\n\t\t\tdetailedUsages,\n\t\t},\n\t\tpairedItem: [{ item: 0 }]\n\t},\n];\n"

},

"typeVersion": 2

},

{

"id": "65843cdf-a21e-428d-b67f-14a5eaba4573",

"name": "Sticky Note",

"type": "n8n-nodes-base.stickyNote",

"position": [

880,

-368

],

"parameters": {

"width": 448,

"height": 384,

"content": "### Sticky Note\n- ⚠️ Always run at the end of the workflow\n- ⚙️ Disable “Wait until finished”\n- 🆔 Requires `excecution_id` (spelled as defined in the `Start` node) and `model_names` (array of AI node names)\n- 📊 Extracts token usage metrics and the models used\n"

},

"typeVersion": 1

},

{

"id": "a17a8cdd-2e14-4c41-93cd-e484992ae53b",

"name": "Execute Workflow",

"type": "n8n-nodes-base.executeWorkflow",

"position": [

624,

-112

],

"parameters": {

"options": {

"waitForSubWorkflow": false

},

"workflowId": {

"__rl": true,

"mode": "list",

"value": "6NVerEoYAOMrSF7k",

"cachedResultName": "workflow shared"

},

"workflowInputs": {

"value": {

"model_names": "={{ [\"gemini\", \"openai\"] }}",

"excecution_id": "={{ $execution.id }}"

},

"schema": [

{

"id": "excecution_id",

"type": "string",

"display": true,

"required": false,

"displayName": "excecution_id",

"defaultMatch": false,

"canBeUsedToMatch": true

},

{

"id": "model_names",

"type": "array",

"display": true,

"required": false,

"displayName": "model_names",

"defaultMatch": false,

"canBeUsedToMatch": true

}

],

"mappingMode": "defineBelow",

"matchingColumns": [],

"attemptToConvertTypes": false,

"convertFieldsToString": true

}

},

"typeVersion": 1.2

},

{

"id": "28b2d6d1-ea89-48fd-a0b5-930ff7cb130c",

"name": "When chat message received",

"type": "@n8n/n8n-nodes-langchain.chatTrigger",

"position": [

-64,

-104

],

"webhookId": "69dd7dd8-912e-4c4f-a901-4903cb8e63d1",

"parameters": {

"options": {}

},

"typeVersion": 1.3

},

{

"id": "a9bfcd5e-38f2-414a-80ba-cece8fd2d318",

"name": "AI Agent",

"type": "@n8n/n8n-nodes-langchain.agent",

"position": [

216,

-208

],

"parameters": {

"options": {},

"needsFallback": true

},

"typeVersion": 2.2

},

{

"id": "d6e22621-3dfb-4990-80b3-74651fbeb35e",

"name": "gemini",

"type": "@n8n/n8n-nodes-langchain.lmChatGoogleGemini",

"position": [

160,

16

],

"parameters": {

"options": {}

},

"credentials": {

"googlePalmApi": {

"id": "MN9FNbuuH1m00o1N",

"name": "Google Gemini(PaLM) Api account"

}

},

"typeVersion": 1

},

{

"id": "6243ac56-332d-4444-9597-9e53364e9a76",

"name": "openai",

"type": "@n8n/n8n-nodes-langchain.lmChatOpenAi",

"position": [

288,

16

],

"parameters": {

"model": {

"__rl": true,

"mode": "list",

"value": "gpt-4.1-mini"

},

"options": {}

},

"credentials": {

"openAiApi": {

"id": "MX73kGdthlmEU4D3",

"name": "OpenAi account"

}

},

"typeVersion": 1.2

},

{

"id": "61f5c198-03e5-4941-897f-1756432583b7",

"name": "Simple Memory",

"type": "@n8n/n8n-nodes-langchain.memoryBufferWindow",

"position": [

416,

16

],

"parameters": {},

"typeVersion": 1.3

}

],

"pinData": {},

"connections": {

"Start": {

"main": [

[

{

"node": "Get an execution",

"type": "main",

"index": 0

}

]

]

},

"gemini": {

"ai_languageModel": [

[

{

"node": "AI Agent",

"type": "ai_languageModel",

"index": 0

}

]

]

},

"openai": {

"ai_languageModel": [

[

{

"node": "AI Agent",

"type": "ai_languageModel",

"index": 1

}

]

]

},

"AI Agent": {

"main": [

[

{

"node": "Execute Workflow",

"type": "main",

"index": 0

}

]

]

},

"map json": {

"main": [

[]

]

},

"Simple Memory": {

"ai_memory": [

[

{

"node": "AI Agent",

"type": "ai_memory",

"index": 0

}

]

]

},

"Get an execution": {

"main": [

[

{

"node": "map json",

"type": "main",

"index": 0

}

]

]

},

"When chat message received": {

"main": [

[

{

"node": "AI Agent",

"type": "main",

"index": 0

}

]

]

}

}

}
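The `map json` Code node above walks the execution's `runData` for each name in `model_names` and sums every object that carries `completionTokens`, `promptTokens`, and `totalTokens`. The standalone sketch below shows the same idea outside n8n so it can be tried with plain Node.js. The `sampleRunData` layout and the helper names `isTokenUsage`, `collectUsages`, and `summarize` are assumptions made for illustration; a real execution payload may nest the usage objects differently.

```javascript
// Hypothetical sample shaped loosely like the runData section of an execution.
// The exact nesting is an assumption; only the three counters matter here.
const sampleRunData = {
  openai: [
    {
      data: {
        ai_languageModel: [[{ json: {
          tokenUsage: { completionTokens: 12, promptTokens: 30, totalTokens: 42 },
        } }]],
      },
    },
  ],
  gemini: [
    {
      data: {
        ai_languageModel: [[{ json: {
          tokenUsage: { completionTokens: 5, promptTokens: 20, totalTokens: 25 },
        } }]],
      },
    },
  ],
};

// True when an object carries all three numeric token counters.
function isTokenUsage(obj) {
  return ['completionTokens', 'promptTokens', 'totalTokens']
    .every((key) => typeof obj[key] === 'number');
}

// Depth-first walk that collects every token-usage object it finds.
function collectUsages(value, found = []) {
  if (typeof value !== 'object' || value === null) return found;
  if (!Array.isArray(value) && isTokenUsage(value)) {
    found.push(value);
    return found; // a usage object is a leaf, so stop descending
  }
  for (const child of Object.values(value)) collectUsages(child, found);
  return found;
}

// Sum usage for the listed node names and count the records found per node.
function summarize(runData, nodeNames) {
  const totals = { completionTokens: 0, promptTokens: 0, totalTokens: 0 };
  const perNode = {};
  for (const name of nodeNames) {
    const usages = collectUsages(runData[name]);
    perNode[name] = usages.length;
    for (const usage of usages) {
      totals.completionTokens += usage.completionTokens;
      totals.promptTokens += usage.promptTokens;
      totals.totalTokens += usage.totalTokens;
    }
  }
  return { totals, perNode };
}

const summary = summarize(sampleRunData, ['gemini', 'openai']);
console.log(JSON.stringify(summary, null, 2));
```

Node names that are missing from `runData` contribute zero records, which mirrors the `continue` in the workflow's loop when a listed node did not run.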