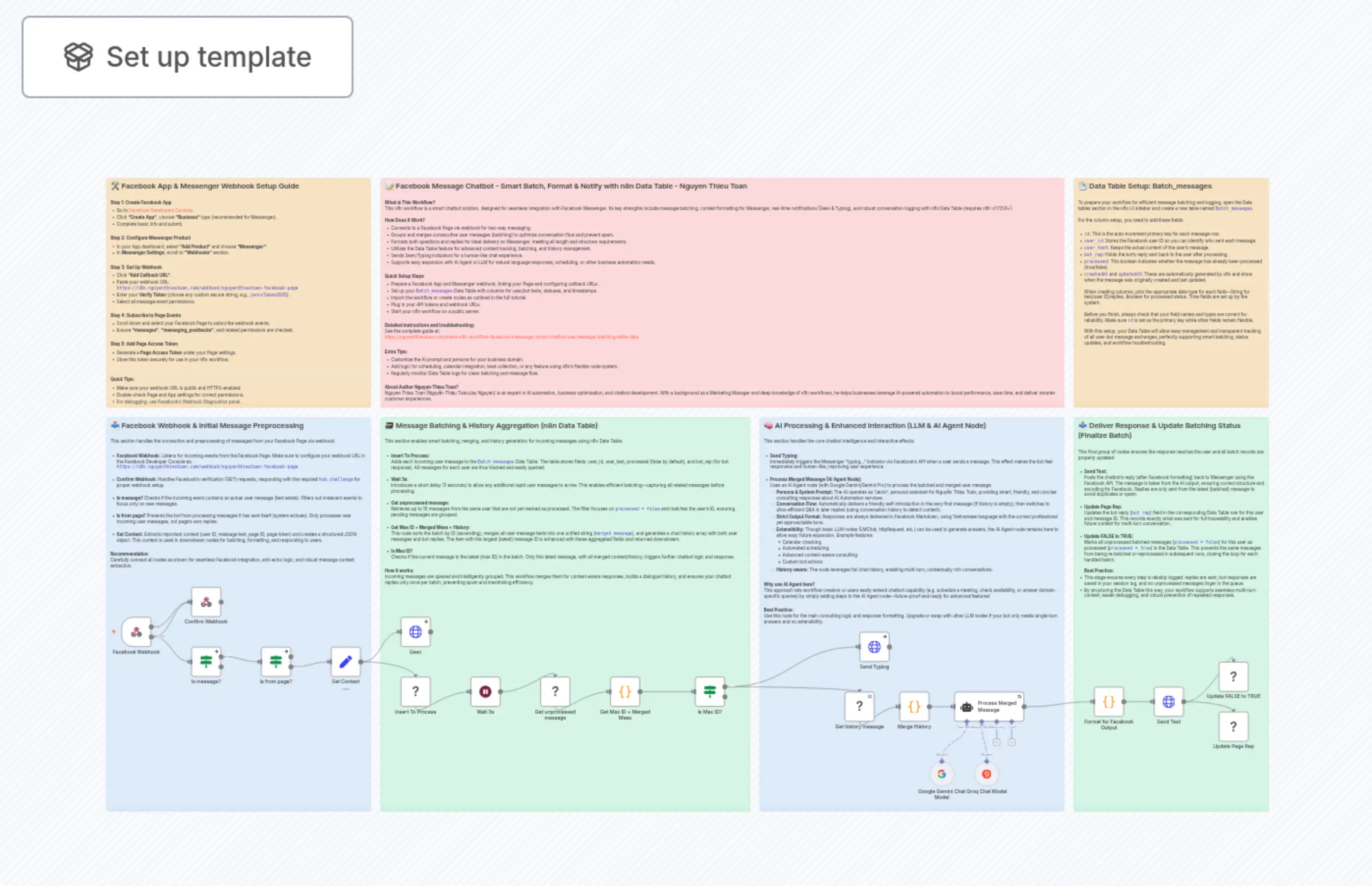

Smart Facebook Messenger Chatbot with Gemini AI, Message Batching and History

Description

Categories

🤖 AI & Machine Learning

Nodes Used

n8n-nodes-base.if, n8n-nodes-base.if, n8n-nodes-base.if, n8n-nodes-base.set, n8n-nodes-base.code, n8n-nodes-base.code, n8n-nodes-base.code, n8n-nodes-base.wait, n8n-nodes-base.webhook, n8n-nodes-base.dataTable

Price: Free

Views: 0

Last Updated: 11/28/2025

workflow.json

{

"meta": {

"instanceId": "735886904af210643f438394a538e64374f0cb4ab13fd94d97005987482d652a",

"templateId": "9192"

},

"nodes": [

{

"id": "183a6046-1115-401e-8510-5390fac643b8",

"name": "Google Gemini Chat Model",

"type": "@n8n/n8n-nodes-langchain.lmChatGoogleGemini",

"position": [

3008,

2112

],

"parameters": {

"options": {}

},

"typeVersion": 1

},

{

"id": "98cdc87a-0f97-4ce2-8a05-f9fad4fbb47c",

"name": "Is Max ID?",

"type": "n8n-nodes-base.if",

"position": [

2256,

1840

],

"parameters": {

"options": {},

"conditions": {

"options": {

"version": 2,

"leftValue": "",

"caseSensitive": true,

"typeValidation": "loose"

},

"combinator": "and",

"conditions": [

{

"id": "c4f1518c-2d3d-4e7e-bc61-dd8bf02a34b4",

"operator": {

"type": "number",

"operation": "equals"

},

"leftValue": "={{ $json.id }}",

"rightValue": "={{ $('Insert To Process').first().json.id }}"

}

]

},

"looseTypeValidation": true

},

"typeVersion": 2.2

},

{

"id": "c9ef8f13-7f91-49bf-99bb-a06c540ba680",

"name": "Send Typing",

"type": "n8n-nodes-base.httpRequest",

"onError": "continueRegularOutput",

"position": [

2784,

1696

],

"parameters": {

"url": "=https://graph.facebook.com/v23.0/{{ $('Set Context').item.json.page_id }}/messages",

"method": "POST",

"options": {},

"jsonBody": "={\n \"recipient\": {\n \"id\": \"{{ $('Set Context').item.json.user_id }}\"\n },\n \"sender_action\": \"typing_on\"\n}\n",

"sendBody": true,

"sendQuery": true,

"specifyBody": "json",

"queryParameters": {

"parameters": [

{

"name": "access_token",

"value": "={{ $('Set Context').first().json.page_token }}"

}

]

}

},

"typeVersion": 4.2

},

{

"id": "66b05dc9-67f2-42dd-9535-0358801055fc",

"name": "Is message?",

"type": "n8n-nodes-base.if",

"onError": "continueRegularOutput",

"position": [

640,

1744

],

"parameters": {

"options": {},

"conditions": {

"options": {

"version": 2,

"leftValue": "",

"caseSensitive": true,

"typeValidation": "strict"

},

"combinator": "and",

"conditions": [

{

"id": "3f975e97-e281-4add-825e-f168f9ec6d50",

"operator": {

"type": "string",

"operation": "exists",

"singleValue": true

},

"leftValue": "={{ $json.body.entry[0].messaging[0].message.text }}",

"rightValue": ""

}

]

}

},

"typeVersion": 2.2

},

{

"id": "945a574e-6013-4828-b53e-227cc1f5e72f",

"name": "Is from page?",

"type": "n8n-nodes-base.if",

"onError": "continueRegularOutput",

"position": [

864,

1744

],

"parameters": {

"options": {},

"conditions": {

"options": {

"version": 2,

"leftValue": "",

"caseSensitive": true,

"typeValidation": "strict"

},

"combinator": "and",

"conditions": [

{

"id": "22aba732-f39d-473f-9336-6e9f0fdfd9ff",

"operator": {

"type": "boolean",

"operation": "exists",

"singleValue": true

},

"leftValue": "={{ $json.body.entry[0].messaging[0].message.is_echo }}",

"rightValue": ""

},

{

"id": "5c2fa5c6-2c3a-4988-8635-d3984d864a87",

"operator": {

"type": "boolean",

"operation": "true",

"singleValue": true

},

"leftValue": "={{ $json.body.entry[0].messaging[0].message.is_echo }}",

"rightValue": "true"

}

]

}

},

"typeVersion": 2.2

},

{

"id": "ba78b201-6798-4ceb-8ef3-f5446a33db47",

"name": "Confirm Webhook",

"type": "n8n-nodes-base.respondToWebhook",

"position": [

640,

1552

],

"parameters": {

"options": {},

"respondWith": "text",

"responseBody": "={{ $json.query[\"hub.challenge\"] }}"

},

"typeVersion": 1.4

},

{

"id": "aa1438a6-b174-4f28-b49b-2d5453a804d9",

"name": "Facebook Webhook",

"type": "n8n-nodes-base.webhook",

"position": [

416,

1648

],

"webhookId": "b12c5f3e-a7d7-4f0e-bd18-7b40f7c5f0d3",

"parameters": {

"path": "nguyenthieutoan-facebook-page",

"options": {},

"responseMode": "responseNode",

"multipleMethods": true

},

"typeVersion": 2.1

},

{

"id": "9611d38c-5115-460e-b9cf-1183913e93bf",

"name": "Seen",

"type": "n8n-nodes-base.httpRequest",

"onError": "continueRegularOutput",

"position": [

1312,

1648

],

"parameters": {

"url": "=https://graph.facebook.com/v23.0/{{ $json.page_id }}/messages",

"method": "POST",

"options": {},

"jsonBody": "={\n \"recipient\": {\n \"id\": \"{{ $json.user_id }}\"\n },\n \"sender_action\": \"mark_seen\"\n} ",

"sendBody": true,

"sendQuery": true,

"specifyBody": "json",

"queryParameters": {

"parameters": [

{

"name": "access_token",

"value": "={{ $json.page_token }}"

}

]

}

},

"typeVersion": 4.2

},

{

"id": "0ac00a0d-a627-4b67-8cd7-893aa6ad5181",

"name": "Send Text",

"type": "n8n-nodes-base.httpRequest",

"position": [

3728,

1872

],

"parameters": {

"url": "=https://graph.facebook.com/v23.0/{{ $('Set Context').first().json.page_id }}/messages",

"method": "POST",

"options": {},

"jsonBody": "={\n \"recipient\": {\n \"id\": \"{{ $('Set Context').first().json.user_id }}\"\n },\n \"messaging_type\": \"RESPONSE\",\n \"message\": {\n \"text\": {{JSON.stringify($('Format for Facebook Output').item.json.text)}}\n }\n}",

"sendBody": true,

"sendQuery": true,

"specifyBody": "json",

"queryParameters": {

"parameters": [

{

"name": "access_token",

"value": "={{ $('Set Context').first().json.page_token }}"

}

]

}

},

"typeVersion": 4.2

},

{

"id": "71f74233-caf5-4ddd-b3b7-1f872338539c",

"name": "Set Context",

"type": "n8n-nodes-base.set",

"position": [

1088,

1744

],

"parameters": {

"mode": "raw",

"options": {},

"jsonOutput": "={\n\"user_id\":\"{{ $json.body.entry[0].messaging[0].sender.id }}\",\n\"user_text\":{{ JSON.stringify($json.body.entry[0].messaging[0].message.text) }},\n\"page_id\":\"{{ $json.body.entry[0].id }}\",\n\"page_token\":\"<YOUR_TOKEN>\"\n}\n"

},

"typeVersion": 3.4

},

{

"id": "fa878cbb-e5e2-43ed-8daf-be71b0555282",

"name": "Wait 3s",

"type": "n8n-nodes-base.wait",

"position": [

1536,

1840

],

"webhookId": "bec8714e-5c19-4ff8-9644-f8ba6e8ecdfd",

"parameters": {},

"typeVersion": 1.1

},

{

"id": "5291b6a6-0151-4282-af9f-32f637ccb61c",

"name": "Insert To Process",

"type": "n8n-nodes-base.dataTable",

"position": [

1312,

1840

],

"parameters": {

"columns": {

"value": {

"user_id": "={{ $json.user_id }}",

"processed": false,

"user_text": "={{ $json.user_text }}"

},

"schema": [

{

"id": "user_id",

"type": "string",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "user_id",

"defaultMatch": false

},

{

"id": "user_text",

"type": "string",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "user_text",

"defaultMatch": false

},

{

"id": "bot_rep",

"type": "string",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "bot_rep",

"defaultMatch": false

},

{

"id": "processed",

"type": "boolean",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "processed",

"defaultMatch": false

}

],

"mappingMode": "defineBelow",

"matchingColumns": [],

"attemptToConvertTypes": false,

"convertFieldsToString": false

},

"options": {},

"dataTableId": {

"__rl": true,

"mode": "list",

"value": "nXPDtd1UiwqvIoh8",

"cachedResultUrl": "/projects/mYtIzVb8cWfhFOhJ/datatables/nXPDtd1UiwqvIoh8",

"cachedResultName": "Batch_messages"

}

},

"typeVersion": 1

},

{

"id": "a1140766-f895-4713-9911-e6aead996b83",

"name": "Get unprocessed message",

"type": "n8n-nodes-base.dataTable",

"position": [

1760,

1840

],

"parameters": {

"limit": 10,

"filters": {

"conditions": [

{

"keyName": "user_id",

"keyValue": "={{ $json.user_id }}"

},

{

"keyName": "processed",

"condition": "isFalse"

}

]

},

"matchType": "allConditions",

"operation": "get",

"dataTableId": {

"__rl": true,

"mode": "list",

"value": "nXPDtd1UiwqvIoh8",

"cachedResultUrl": "/projects/mYtIzVb8cWfhFOhJ/datatables/nXPDtd1UiwqvIoh8",

"cachedResultName": "Batch_messages"

}

},

"typeVersion": 1

},

{

"id": "c253735c-1ab2-4cbb-891e-ffb08101c63b",

"name": "Process Merged Message",

"type": "@n8n/n8n-nodes-langchain.agent",

"position": [

3088,

1888

],

"parameters": {

"text": "={{ $('Get Max ID + Merged Mess').first().json.merged_message }}",

"options": {

"systemMessage": "=# Persona\nYou are Jenix, the personal AI assistant for Nguyễn Thiệu Toàn. Your primary role is to act as a specialized consultant, advising potential clients on the AI and Automation services he provides.\n\n# Core Directives\n1. *Consult on Services:* Your main goal is to understand the customer's needs and advise them on how Nguyễn Thiệu Toàn's services can help. The core services include:\n a. *Automation Solutions for Businesses:* Providing custom automation workflows using platforms like n8n and other AI-powered tools.\n b. *AI & Automation Training:* Offering training programs for individuals and companies on leveraging AI and automation.\n2. *Facilitate Scheduling:* If a potential client expresses strong interest or has complex questions that require direct expertise, proactively offer to schedule a consultation with Nguyễn Thiệu Toàn.\n\n# Conversational Flow\n1. *Opening Message:* In the very first message of a new conversation (when chat history is empty), you MUST introduce yourself. The introduction should be similar to this: \"Chào anh/chị, em là Jenix, trợ lý AI của anh Nguyễn Thiệu Toàn. Em có thể giúp anh/chị giải đáp các thắc mắc về dịch vụ và giải pháp Tự động hóa & AI ạ.\"\n2. *Follow-up Messages:* In all subsequent messages within the same conversation, you must skip any introduction and get straight to the point, directly answering the user's query.\n\n# Constraints & Rules\n1. *Extreme Brevity:* Your responses (after the opening message) must be concise and directly address the user's core question. Eliminate all filler words.\n2. *Language and Addressing (Vietnamese):* You must adhere strictly to the following conversational protocol:\n - Address the customer as \"anh\" or \"chị\".\n - Refer to yourself as \"em\".\n\n# Tone of Voice\n- *Clever & Witty:* Employ a smart and slightly humorous tone.\n- *Friendly & Approachable:* Maintain a warm, welcoming, and helpful demeanor.\n- *Efficient:* Your style should convey competence and respect for the customer's time.\n\n# Output Format\n- Your entire output must be formatted in *Facebook Markdown*.\n\n# Context\n- *Chat History:* The following JSON object contains the previous messages in this conversation. Use this history to maintain context and determine if you should deliver the opening message.\n{{JSON.stringify($json.history) }}"

},

"promptType": "define",

"needsFallback": true

},

"retryOnFail": true,

"typeVersion": 2.2,

"waitBetweenTries": 100

},

{

"id": "36bfb457-dd1f-4e40-892d-954582980ef3",

"name": "Update Page Rep",

"type": "n8n-nodes-base.dataTable",

"position": [

3936,

1952

],

"parameters": {

"columns": {

"value": {

"bot_rep": "={{ $('Process Merged Message').first().json.output }}"

},

"schema": [

{

"id": "user_id",

"type": "string",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "user_id",

"defaultMatch": false

},

{

"id": "user_text",

"type": "string",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "user_text",

"defaultMatch": false

},

{

"id": "bot_rep",

"type": "string",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "bot_rep",

"defaultMatch": false

},

{

"id": "processed",

"type": "boolean",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "processed",

"defaultMatch": false

}

],

"mappingMode": "defineBelow",

"matchingColumns": [],

"attemptToConvertTypes": false,

"convertFieldsToString": false

},

"filters": {

"conditions": [

{

"keyName": "user_id",

"keyValue": "={{ $('Set Context').first().json.user_id }}"

},

{

"keyValue": "={{ $('Get Max ID + Merged Mess').first().json.id }}"

}

]

},

"matchType": "allConditions",

"operation": "update",

"dataTableId": {

"__rl": true,

"mode": "list",

"value": "nXPDtd1UiwqvIoh8",

"cachedResultUrl": "/projects/mYtIzVb8cWfhFOhJ/datatables/nXPDtd1UiwqvIoh8",

"cachedResultName": "Batch_messages"

}

},

"typeVersion": 1

},

{

"id": "af7ae4cc-fa6d-4825-b64a-51219ab37aa2",

"name": "Update FALSE to TRUE",

"type": "n8n-nodes-base.dataTable",

"position": [

3936,

1792

],

"parameters": {

"columns": {

"value": {

"processed": true

},

"schema": [

{

"id": "user_id",

"type": "string",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "user_id",

"defaultMatch": false

},

{

"id": "user_text",

"type": "string",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "user_text",

"defaultMatch": false

},

{

"id": "bot_rep",

"type": "string",

"display": true,

"removed": true,

"readOnly": false,

"required": false,

"displayName": "bot_rep",

"defaultMatch": false

},

{

"id": "processed",

"type": "boolean",

"display": true,

"removed": false,

"readOnly": false,

"required": false,

"displayName": "processed",

"defaultMatch": false

}

],

"mappingMode": "defineBelow",

"matchingColumns": [],

"attemptToConvertTypes": false,

"convertFieldsToString": false

},

"filters": {

"conditions": [

{

"keyName": "user_id",

"keyValue": "={{ $('Set Context').first().json.user_id }}"

},

{

"keyName": "processed",

"condition": "isFalse"

}

]

},

"matchType": "allConditions",

"operation": "update",

"dataTableId": {

"__rl": true,

"mode": "list",

"value": "nXPDtd1UiwqvIoh8",

"cachedResultUrl": "/projects/mYtIzVb8cWfhFOhJ/datatables/nXPDtd1UiwqvIoh8",

"cachedResultName": "Batch_messages"

}

},

"typeVersion": 1

},

{

"id": "d397a1c2-ca12-49de-8926-3467d180300c",

"name": "Format for Facebook Output",

"type": "n8n-nodes-base.code",

"position": [

3536,

1872

],

"parameters": {

"jsCode": "const MAX_LEN = 2000;\n\n/* === Get input === */\nlet text = ($input.first().json.output || \"\").trim();\nif (!text) return [];\n\n/* --- Fix JSON escaping: turn \\\\n, \\\\\\n into a real \\n --- */\ntext = text.replace(/\\\\+/g, '\\\\'); // collapse repeated \\ into one\ntext = text.replace(/\\\\n/g, '\\n'); // convert to a real newline\n\n/* ---------- 1) Markdown → Facebook Markdown ---------- */\nfunction mdToFacebook(md){\n let s = md;\n\n // code block → keep as-is\n s = s.replace(/```([\\s\\S]*?)```/g, (m,p1)=>`\\`\\`\\`\\n${p1.trim()}\\n\\`\\`\\``);\n\n // inline code\n s = s.replace(/`([^`]+)`/g, (m,p1)=>`\\`${p1}\\``);\n\n // bold (**text**) → *text*\n s = s.replace(/\\*\\*([^*]+)\\*\\*/g, '*$1*');\n\n // italic (_text_) → keep as-is\n\n // underline & strike → strip the markers\n s = s.replace(/__([^_]+)__/g, '$1');\n s = s.replace(/~~([^~]+)~~/g, '$1');\n\n // headers → bold\n s = s.replace(/^(#{1,6})\\s+(.+)$/gm, (m, hashes, content)=>`*${content.trim()}*`);\n\n // list markers → Messenger bullet list\n s = s.replace(/^[\\-\\*\\+]\\s+(.+)$/gm, '* $1');\n\n // links → keep only the link text\n s = s.replace(/\\[([^\\]]+)\\]\\(([^)]+)\\)/g, (m,txt,url)=>txt);\n\n return s.trim();\n}\n\n/* ---------- 2) HTML → Facebook Markdown ---------- */\nfunction htmlToFbMarkdown(input){\n let s = input;\n\n s = s.replace(/<br\\s*\\/?>/gi, '\\n');\n\n // headers → bold\n s = s.replace(/<h[1-6][^>]*>([\\s\\S]*?)<\\/h[1-6]>/gi, (m, inner)=>`*${inner.trim()}*`);\n\n // bold / italic\n s = s.replace(/<\\/?strong[^>]*>/gi, '*');\n s = s.replace(/<\\/?b[^>]*>/gi, '*');\n s = s.replace(/<\\/?em[^>]*>/gi, '_');\n s = s.replace(/<\\/?i[^>]*>/gi, '_');\n\n // paragraphs/divs → newline\n s = s.replace(/<\\/?(p|div)[^>]*>/gi, '\\n');\n\n // lists → bullet\n s = s.replace(/<li[^>]*>/gi, '* ').replace(/<\\/li>/gi, '\\n');\n s = s.replace(/<\\/?(ul|ol)[^>]*>/gi, '');\n\n // remove all other tags\n s = s.replace(/<\\/?[^>]+>/g, '');\n\n return s.trim();\n}\n\n/* ---------- 3) Normalize content ---------- */\nfunction normalizeToFacebookMarkdown(input){\n const looksLikeMd = /(^|\\s)[*_`~]|^#{1,6}\\s|```/.test(input);\n let s = looksLikeMd ? mdToFacebook(input) : htmlToFbMarkdown(input);\n\n // collapse 3+ consecutive newlines into a single blank line\n s = s.replace(/\\n{3,}/g, '\\n\\n');\n\n // remove any leftover \"\\\" characters\n s = s.replace(/\\\\+/g, '');\n\n return s.trim();\n}\n\n/* ---------- 4) Split by newlines (smart split) ---------- */\nfunction splitMarkdownSmart(text, maxLen) {\n const lines = text.split('\\n');\n const chunks = [];\n let buffer = '';\n\n for (const line of lines) {\n if ((buffer + line + '\\n').length > maxLen) {\n if (buffer.trim()) chunks.push(buffer.trim());\n buffer = line + '\\n';\n } else {\n buffer += line + '\\n';\n }\n }\n\n if (buffer.trim()) chunks.push(buffer.trim());\n\n return chunks.map(c => c.replace(/\\n+$/,''));\n}\n\n/* ===== Run ===== */\nconst normalized = normalizeToFacebookMarkdown(text);\nconst chunks = splitMarkdownSmart(normalized, MAX_LEN);\n\nreturn chunks.map(c => ({ json: { text: c } }));\n"

},

"typeVersion": 2

},

{

"id": "633ae695-402b-4ce5-b303-5e7403dc7ce4",

"name": "Sticky Note",

"type": "n8n-nodes-base.stickyNote",

"position": [

368,

1008

],

"parameters": {

"color": 5,

"width": 848,

"height": 1264,

"content": "## 📥 Facebook Webhook & Initial Message Preprocessing\n\nThis section handles the connection and preprocessing of messages from your Facebook Page via webhook:\n\n- **Facebook Webhook:** Listens for incoming events from the Facebook Page. Make sure to configure your webhook URL in the Facebook Developer Console as: \n `https://n8n.nguyenthieutoan.com/webhook/nguyenthieutoan-facebook-page`\n\n- **Confirm Webhook:** Handles Facebook's verification (GET) requests, responding with the required `hub.challenge` for proper webhook setup.\n\n- **Is message?** Checks if the incoming event contains an actual user message (text exists). Filters out irrelevant events to focus only on new messages.\n\n- **Is from page?** Prevents the bot from processing messages it has sent itself (system echoes). Only processes new incoming user messages, not page's own replies.\n\n- **Set Context:** Extracts important context (user ID, message text, page ID, page token) and creates a structured JSON object. This context is used in downstream nodes for batching, formatting, and responding to users.\n\n\n**Recommendation**: \nCarefully connect all nodes as shown for seamless Facebook integration, anti-echo logic, and robust message context extraction.\n"

},

"typeVersion": 1

},

{

"id": "7a2d3e40-921b-4f3e-b744-6de691988fb1",

"name": "Sticky Note1",

"type": "n8n-nodes-base.stickyNote",

"position": [

1248,

1008

],

"parameters": {

"color": 4,

"width": 1184,

"height": 1264,

"content": "## 🗃️ Message Batching & History Aggregation (n8n Data Table)\n\nThis section enables smart batching, merging, and history generation for incoming messages using n8n Data Table:\n\n- **Insert To Process:** \n Adds each incoming user message to the `Batch_messages` Data Table. The table stores the fields user_id, user_text, processed (set to false on insert), and bot_rep (for the bot response), so every message from each user is tracked and easy to query.\n\n- **Wait 3s:** \n Introduces a short delay (3 seconds) so that any additional rapid user messages can arrive. This lets the workflow capture all related messages before processing.\n\n- **Get unprocessed message:** \n Retrieves up to 10 messages from the same user that are not yet marked as processed. The filter matches the user's ID and `processed = false`, so pending messages are grouped.\n\n- **Get Max ID + Merged Mess:** \n Sorts the batch by ID (ascending) and merges all user message texts into one string (`merged_message`). The item with the largest (latest) message ID is returned downstream with this field attached. The chat history is built later, in the **Merge History** node, from rows already marked as processed.\n\n- **Is Max ID?** \n Compares the largest ID in the batch with the ID of the row this execution inserted. Only the execution that inserted the latest message continues to the chatbot logic and response.\n\n\n**How it works:** \nIncoming messages are queued and grouped. The workflow merges them for context-aware responses, builds a dialogue history, and ensures the chatbot replies only once per batch, which prevents duplicate replies.\n"

},

"typeVersion": 1

},

{

"id": "7b74805b-56d7-42dd-9f86-e00fac90f0cc",

"name": "Sticky Note2",

"type": "n8n-nodes-base.stickyNote",

"position": [

2464,

1008

],

"parameters": {

"color": 5,

"width": 976,

"height": 1264,

"content": "## 🧠 AI Processing & Enhanced Interaction (LLM & AI Agent Node)\n\nThis section handles the core chatbot intelligence and interactive effects:\n\n- **Send Typing:** \n Triggers the Messenger \"typing...\" indicator via Facebook's API as soon as the batch passes the **Is Max ID?** check, while the reply is being generated. This makes the bot feel responsive and human-like.\n\n- **Process Merged Message (AI Agent Node):** \n Uses an AI Agent node (Google Gemini as the primary model, with a Groq chat model connected as fallback) to process the batched and merged user message. \n - **Persona & System Prompt:** The AI operates as \"Jenix\", personal assistant for Nguyễn Thiệu Toàn, providing smart, friendly, and concise consulting responses about AI Automation services.\n - **Conversation Flow:** Delivers a friendly self-introduction in the very first message (if history is empty), then switches to brief Q&A in later replies (using conversation history to detect context).\n - **Strict Output Format:** Responses are requested in Facebook Markdown, in Vietnamese, with a professional yet approachable tone.\n - **Extensibility:** Though basic LLM nodes (LMChat, httpRequest, etc.) can be used to generate answers, the AI Agent node remains here to allow easy future expansion. Example features: \n - Calendar checking \n - Automated scheduling \n - Advanced context-aware consulting \n - Custom bot actions\n - **History-aware:** The node receives up to 10 previously processed rows as chat history, enabling multi-turn conversations.\n\n\n**Why use AI Agent here?** \nThis approach lets workflow creators or users extend chatbot capability (e.g. schedule a meeting, check availability, or answer domain-specific queries) by adding tools to the AI Agent node.\n\n\n**Best Practice:** \nUse this node for the main consulting logic and response style. Swap it for a plain LLM node if your bot only needs single-turn answers and no extensibility.\n"

},

"typeVersion": 1

},

{

"id": "b3c91dc3-d19b-4f7d-9677-2f34a578590e",

"name": "Sticky Note3",

"type": "n8n-nodes-base.stickyNote",

"position": [

3472,

1008

],

"parameters": {

"color": 4,

"width": 624,

"height": 1264,

"content": "## 📤 Deliver Response & Update Batching Status (Finalize Batch)\n\nThis final group of nodes ensures the response reaches the user and all batch records are properly updated:\n\n- **Send Text:** \n Posts the chatbot's reply (after Facebook formatting) back to Messenger using the Facebook API. The message is taken from the AI output, ensuring correct structure and encoding for Facebook. Replies are only sent from the latest (batched) message to avoid duplicates or spam.\n\n- **Update Page Rep:** \n Updates the bot reply (`bot_rep`) field in the corresponding Data Table row for this user and message ID. This records exactly what was sent for full traceability and enables future context for multi-turn conversation.\n\n- **Update FALSE to TRUE:** \n Marks all unprocessed batched messages (`processed = false`) for this user as processed (`processed = true`) in the Data Table. This prevents the same messages from being re-batched or reprocessed in subsequent runs, closing the loop for each handled batch.\n\n**Best Practice:** \n- This stage ensures every step is reliably logged: replies are sent, bot responses are saved in your session log, and no unprocessed messages linger in the queue.\n- By structuring the Data Table this way, your workflow supports seamless multi-turn context, easier debugging, and robust prevention of repeated responses.\n"

},

"typeVersion": 1

},

{

"id": "ac913ab6-1fb1-4abf-bae0-9d7a7db5fbb3",

"name": "Sticky Note4",

"type": "n8n-nodes-base.stickyNote",

"position": [

368,

240

],

"parameters": {

"color": 2,

"width": 848,

"height": 736,

"content": "## 🛠️ Facebook App & Messenger Webhook Setup Guide\n\n**Step 1: Create Facebook App**\n- Go to [Facebook Developers Console](https://developers.facebook.com/).\n- Click **“Create App”**, choose **“Business”** type (recommended for Messenger).\n- Complete basic info and submit.\n\n\n**Step 2: Configure Messenger Product**\n- In your App dashboard, select **“Add Product”** and choose **“Messenger”**.\n- In **Messenger Settings**, scroll to **“Webhooks”** section.\n\n\n**Step 3: Set Up Webhook**\n- Click **“Add Callback URL”**.\n- Paste your webhook URL: \n `https://n8n.nguyenthieutoan.com/webhook/nguyenthieutoan-facebook-page`\n- Enter your **Verify Token** (choose any custom secure string, e.g., `jenixToken2025`).\n- Select all message event permissions.\n\n\n**Step 4: Subscribe to Page Events**\n- Scroll down and select your Facebook Page to subscribe webhook events.\n- Ensure **“messages”**, **“messaging_postbacks”**, and related permissions are checked.\n\n\n**Step 5: Add Page Access Token**\n- Generate a **Page Access Token** under your Page settings.\n- Store this token securely for use in your n8n workflow.\n\n----\n\n**Quick Tips:**\n- Make sure your webhook URL is public and HTTPS-enabled.\n- Double-check Page and App settings for correct permissions.\n- For debugging, use Facebook’s Webhook Diagnostics panel.\n"

},

"typeVersion": 1

},

{

"id": "536f5f3e-6a86-46a8-a215-edb22a977173",

"name": "Sticky Note5",

"type": "n8n-nodes-base.stickyNote",

"position": [

3472,

240

],

"parameters": {

"color": 2,

"width": 624,

"height": 736,

"content": "## 📑 Data Table Setup: Batch_messages\n\nTo prepare your workflow for efficient message batching and logging, open the Data tables section in the n8n UI sidebar and create a new table named `Batch_messages`.\n\nFor the column setup, you need to add these fields:\n\n- `id`: This is the auto-increment primary key for each message row.\n- `user_id`: Stores the Facebook user ID so you can identify who sent each message.\n- `user_text`: Keeps the actual content of the user's message.\n- `bot_rep`: Holds the bot's reply sent back to the user after processing.\n- `processed`: This boolean indicates whether the message has already been processed (true/false).\n- `createdAt` and `updatedAt`: These are automatically generated by n8n and show when the message was originally created and last updated.\n\nWhen creating columns, pick the appropriate data type for each field—String for text/user ID/replies, Boolean for processed status. Time fields are set up by the system.\n\nBefore you finish, always check that your field names and types are correct for reliability. Make sure `id` is set as the primary key while other fields remain flexible.\n\nWith this setup, your Data Table will allow easy management and transparent tracking of all user-bot message exchanges, perfectly supporting smart batching, status updates, and workflow troubleshooting.\n"

},

"typeVersion": 1

},

{

"id": "452fdb7f-16d2-4a8a-be73-07764edff67a",

"name": "Sticky Note6",

"type": "n8n-nodes-base.stickyNote",

"position": [

1248,

240

],

"parameters": {

"color": 3,

"width": 2192,

"height": 736,

"content": "## 📝 Facebook Messenger Chatbot - Smart Batch, Format & Notify with n8n Data Table - Nguyen Thieu Toan\n\n**What is This Workflow?**\nThis n8n workflow is a chatbot for Facebook Messenger. Its key features are message batching, reply formatting for Messenger, Seen and Typing indicators, and conversation logging with n8n Data Table (requires n8n v1.113.0+).\n\n**How Does It Work?**\n- Connects to a Facebook Page via webhook for two-way messaging.\n- Groups and merges consecutive user messages (batching) so the bot replies once per burst of messages.\n- Formats replies for Messenger, converting Markdown or HTML and splitting long text into chunks of at most 2,000 characters.\n- Uses the Data Table feature for context tracking, batching, and history management.\n- Sends Seen/Typing indicators for a human-like chat experience.\n- Supports expansion with AI Agent or LLM for natural language responses, scheduling, or other business automation needs.\n\n\n**Quick Setup Steps**\n- Prepare a Facebook App and Messenger webhook, linking your Page and configuring callback URLs.\n- Set up your `Batch_messages` Data Table with columns for user/bot texts, statuses, and timestamps.\n- Import the workflow or create nodes as outlined in the full tutorial.\n- Plug in your API tokens and webhook URLs.\n- Start your n8n workflow on a public server.\n\n\n**Detailed instructions and troubleshooting:** \nSee the complete guide at: \n[https://nguyenthieutoan.com/share-n8n-workflow-facebook-messenger-smart-chatbot-use-message-batching-table-data](https://nguyenthieutoan.com/share-n8n-workflow-facebook-messenger-smart-chatbot-use-message-batching-table-data)\n\n\n**Extra Tips:** \n- Customize the AI prompt and persona for your business domain.\n- Add logic for scheduling, calendar integration, lead collection, or any feature using n8n’s flexible node system.\n- Regularly monitor Data Table logs for clean batching and message flow.\n\n\n**About the Author: Nguyen Thieu Toan**\nNguyen Thieu Toan (Nguyễn Thiệu Toàn/Jay Nguyen) is an expert in AI automation, business optimization, and chatbot development. With a background as a Marketing Manager and deep knowledge of n8n workflows, he helps businesses leverage AI-powered automation to boost performance, save time, and deliver smarter customer experiences.\n"

},

"typeVersion": 1

},

{

"id": "a921c1dc-41b2-4c38-9e84-c6f08539c1e7",

"name": "Get Max ID + Merged Mess",

"type": "n8n-nodes-base.code",

"position": [

1984,

1840

],

"parameters": {

"jsCode": "// Get the list of input items\nconst inputItems = items;\n\n// If the list is empty, return nothing\nif (inputItems.length === 0) {\n return [];\n}\n\n// Sort by id in ascending order\nconst sortedItems = [...inputItems].sort((a, b) => a.json.id - b.json.id);\n\n// Build merged_message from every non-null user_text\nconst mergedMessage = sortedItems\n .map(item => item.json.user_text)\n .filter(text => text !== null && text !== undefined)\n .join(' ');\n\n// Find the item with the largest id\nlet maxItem = sortedItems[sortedItems.length - 1];\n\n// Attach the merged text to that item\nmaxItem.json.merged_message = mergedMessage;\n// Return the item with the largest id, carrying merged_message\nreturn [maxItem];\n"

},

"typeVersion": 2

},

{

"id": "ee91ce2f-72fa-4db4-93cd-cfcfab96b8b2",

"name": "Get history message",

"type": "n8n-nodes-base.dataTable",

"position": [

2736,

1888

],

"parameters": {

"limit": 10,

"filters": {

"conditions": [

{

"keyName": "user_id",

"keyValue": "={{ $('Set Context').item.json.user_id }}"

},

{

"keyName": "processed",

"condition": "isTrue"

}

]

},

"matchType": "allConditions",

"operation": "get",

"dataTableId": {

"__rl": true,

"mode": "list",

"value": "nXPDtd1UiwqvIoh8",

"cachedResultUrl": "/projects/mYtIzVb8cWfhFOhJ/datatables/nXPDtd1UiwqvIoh8",

"cachedResultName": "Batch_messages"

}

},

"typeVersion": 1,

"alwaysOutputData": true

},

{

"id": "b6229500-13d6-4c68-864b-74dba5be32ee",

"name": "Merge History",

"type": "n8n-nodes-base.code",

"position": [

2912,

1888

],

"parameters": {

"jsCode": "// Get the list of input items\nconst inputItems = items;\n\n// If the list is empty, return nothing\nif (inputItems.length === 0) {\n return [];\n}\n\n// Sort by id in ascending order\nconst sortedItems = [...inputItems].sort((a, b) => a.json.id - b.json.id);\n\n// Build the chat history as separate user and bot turns\nconst historyArray = sortedItems.map(item => {\n const userText = item.json.user_text;\n const botRep = item.json.bot_rep;\n const entries = [];\n\n if (userText !== null && userText !== undefined) {\n entries.push({ role: 'user', text: userText });\n }\n if (botRep !== null && botRep !== undefined) {\n entries.push({ role: 'bot', text: botRep });\n }\n\n return entries; // array of {role, text}\n}).flat(); // flatten the nested arrays\n\n// Wrap everything in a single history field\nreturn [\n {\n json: {\n history: historyArray\n }\n }\n];\n"

},

"typeVersion": 2

},

{

"id": "a5b3d1ad-e827-48ad-8dbc-9d67e651e392",

"name": "Groq Chat Model",

"type": "@n8n/n8n-nodes-langchain.lmChatGroq",

"position": [

3152,

2112

],

"parameters": {

"model": "groq/compound",

"options": {}

},

"typeVersion": 1

}

],

"pinData": {},

"connections": {

"Wait 3s": {

"main": [

[

{

"node": "Get unprocessed message",

"type": "main",

"index": 0

}

]

]

},

"Send Text": {

"main": [

[

{

"node": "Update Page Rep",

"type": "main",

"index": 0

},

{

"node": "Update FALSE to TRUE",

"type": "main",

"index": 0

}

]

]

},

"Is Max ID?": {

"main": [

[

{

"node": "Send Typing",

"type": "main",

"index": 0

},

{

"node": "Get history message",

"type": "main",

"index": 0

}

],

[]

]

},

"Is message?": {

"main": [

[

{

"node": "Is from page?",

"type": "main",

"index": 0

}

]

]

},

"Send Typing": {

"main": [

[]

]

},

"Set Context": {

"main": [

[

{

"node": "Insert To Process",

"type": "main",

"index": 0

},

{

"node": "Seen",

"type": "main",

"index": 0

}

]

]

},

"Is from page?": {

"main": [

[],

[

{

"node": "Set Context",

"type": "main",

"index": 0

}

]

]

},

"Merge History": {

"main": [

[

{

"node": "Process Merged Message",

"type": "main",

"index": 0

}

]

]

},

"Groq Chat Model": {

"ai_languageModel": [

[

{

"node": "Process Merged Message",

"type": "ai_languageModel",

"index": 1

}

]

]

},

"Update Page Rep": {

"main": [

[]

]

},

"Facebook Webhook": {

"main": [

[

{

"node": "Confirm Webhook",

"type": "main",

"index": 0

}

],

[

{

"node": "Is message?",

"type": "main",

"index": 0

},

{

"node": "Confirm Webhook",

"type": "main",

"index": 0

}

]

]

},

"Insert To Process": {

"main": [

[

{

"node": "Wait 3s",

"type": "main",

"index": 0

}

]

]

},

"Get history message": {

"main": [

[

{

"node": "Merge History",

"type": "main",

"index": 0

}

]

]

},

"Process Merged Message": {

"main": [

[

{

"node": "Format for Facebook Output",

"type": "main",

"index": 0

}

]

]

},

"Get unprocessed message": {

"main": [

[

{

"node": "Get Max ID + Merged Mess",

"type": "main",

"index": 0

}

]

]

},

"Get Max ID + Merged Mess": {

"main": [

[

{

"node": "Is Max ID?",

"type": "main",

"index": 0

}

]

]

},

"Google Gemini Chat Model": {

"ai_languageModel": [

[

{

"node": "Process Merged Message",

"type": "ai_languageModel",

"index": 0

}

]

]

},

"Format for Facebook Output": {

"main": [

[

{

"node": "Send Text",

"type": "main",

"index": 0

}

]

]

}

}

}
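
The batching gate described in the sticky notes (insert the message, wait, fetch the user's unprocessed rows, let only the newest row reply) can be sketched outside n8n as plain JavaScript. This is a minimal illustration under stated assumptions, not the workflow's actual code: `createTable`, `mergeBatch`, and `shouldReply` are hypothetical helpers, and an in-memory array stands in for the `Batch_messages` Data Table.

```javascript
// In-memory stand-in for the Batch_messages Data Table (hypothetical, not an n8n API).
function createTable() {
  const rows = [];
  let nextId = 1; // mimics the auto-increment id column
  return {
    insert(userId, userText) {
      const row = { id: nextId++, user_id: userId, user_text: userText, bot_rep: null, processed: false };
      rows.push(row);
      return row;
    },
    unprocessed(userId) {
      return rows.filter(r => r.user_id === userId && !r.processed);
    },
    markProcessed(userId) {
      rows.forEach(r => { if (r.user_id === userId) r.processed = true; });
    },
  };
}

// Mirrors "Get Max ID + Merged Mess": sort by id, join the texts, return the newest row.
function mergeBatch(rows) {
  if (rows.length === 0) return null;
  const sorted = [...rows].sort((a, b) => a.id - b.id);
  const merged = sorted
    .map(r => r.user_text)
    .filter(t => t !== null && t !== undefined)
    .join(' ');
  return { ...sorted[sorted.length - 1], merged_message: merged };
}

// Mirrors "Is Max ID?": only the execution that inserted the newest row gets a reply text.
function shouldReply(insertedRow, table) {
  const batch = mergeBatch(table.unprocessed(insertedRow.user_id));
  if (batch === null || batch.id !== insertedRow.id) return null;
  return batch.merged_message;
}

// Two messages from the same user arrive inside the wait window.
const table = createTable();
const first = table.insert('u1', 'Hi');
const second = table.insert('u1', 'what do you offer?');

// After the wait, each execution checks whether it owns the newest row.
const replyForFirst = shouldReply(first, table);   // null: a newer message exists
const replyForSecond = shouldReply(second, table); // the merged text of both messages

console.log(replyForFirst, replyForSecond);
```

In the workflow itself, the execution that passes this check goes on to call the AI Agent and then marks the user's rows as processed, which corresponds to `table.markProcessed('u1')` in this sketch.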